Interactive Touchfloor - 3D from 2D

Smart Room | Human-Computer Interaction | Pressure-Sensitive Floor

Challenge

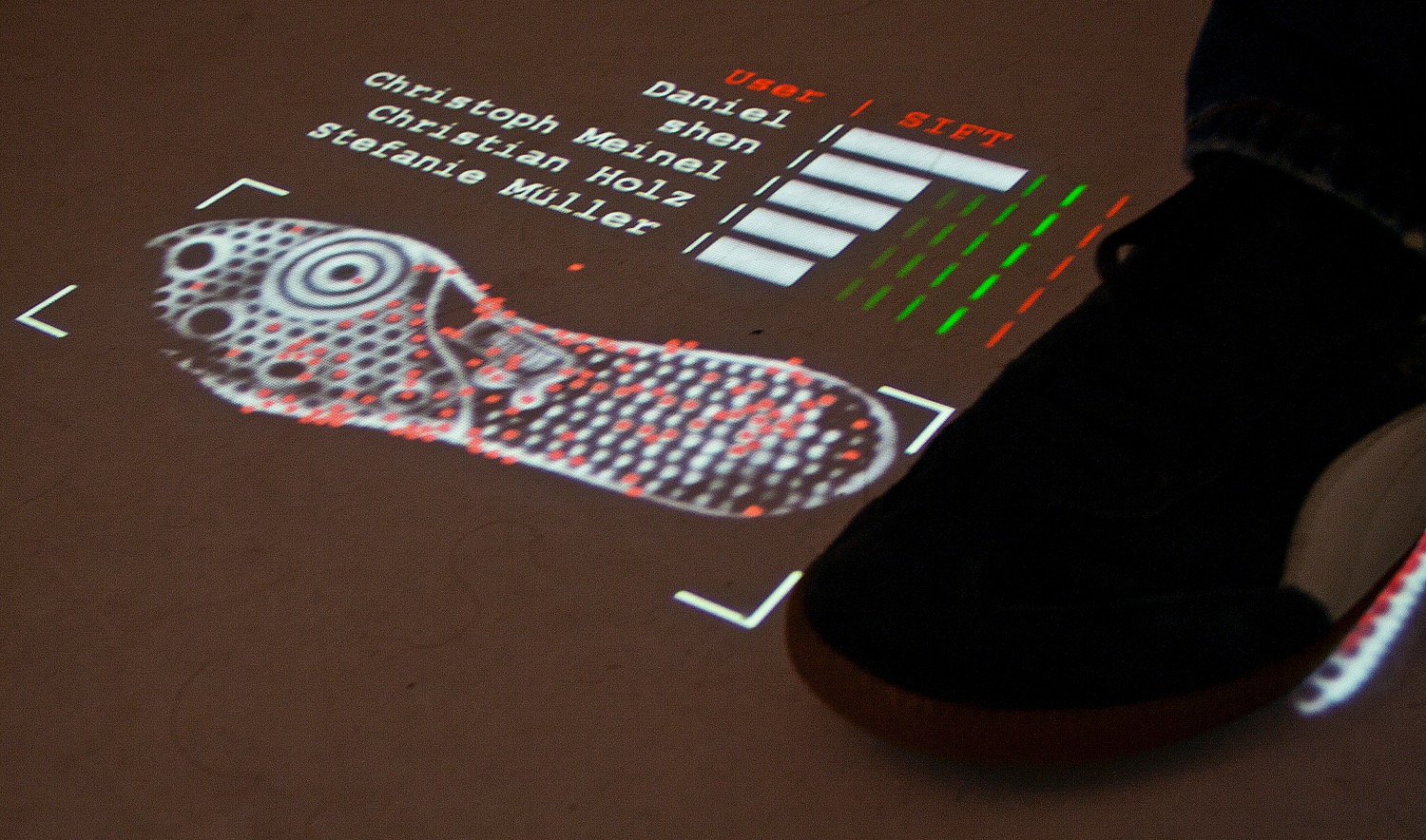

Touchscreens are everywhere, but why does interaction with them focus only on the fingers? In this Human-Computer Interaction (HCI) project, we explored how people can interact with computers through the floor.

Solution

We built a framework that takes two-dimensional pressure input from the floor and attempts to translate it into three-dimensional space, reconstructing, for example, how a person is standing on the floor.

My Role

This was my bachelor project at the HCI chair of the Hasso Plattner Institute. I contributed to the 2D-to-3D framework.

I also investigated a research question: can high-speed cameras track the trajectory of a basketball? When a ball hits the floor, for example during dribbling, it stays in contact with the floor for about 12 milliseconds. We recorded at 240 fps with a high-speed camera, which captures about 4 frames of the basketball touching the floor. By looking at how the ball imprint shifts over the course of these 4 frames, we can predict the area where the ball will touch the floor next, provided nobody interferes with the ball in between. After calibration, we could also estimate the height from which the ball was dropped.
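The imprint-shift idea can be illustrated with a minimal C++ sketch. It is not the project's actual code: the `Point2D` type, the function names, and the `flightTimeSeconds` parameter are hypothetical, and it assumes the imprint centroid of each frame has already been extracted and that the ball keeps a constant horizontal velocity while in the air.

```cpp
#include <cassert>
#include <vector>

// Centroid of the ball imprint in one camera frame, in floor coordinates.
// (Hypothetical type; the unit, e.g. centimeters, is an assumption.)
struct Point2D {
    double x;
    double y;
};

// Average shift of the imprint centroid between consecutive frames.
Point2D meanShiftPerFrame(const std::vector<Point2D>& centroids) {
    assert(centroids.size() >= 2 && "need at least two frames");
    // The sum of consecutive differences telescopes to (last - first).
    const double steps = static_cast<double>(centroids.size() - 1);
    return {(centroids.back().x - centroids.front().x) / steps,
            (centroids.back().y - centroids.front().y) / steps};
}

// Extrapolates the center of the next contact area.
// Assumption: the horizontal velocity measured during contact stays constant
// for the whole flight (no spin, no drag, nobody touching the ball).
// flightTimeSeconds is a hypothetical input, e.g. derived from the bounce height.
Point2D predictNextContact(const std::vector<Point2D>& centroids,
                           double framesPerSecond,
                           double flightTimeSeconds) {
    const Point2D shift = meanShiftPerFrame(centroids);
    const double framesInFlight = framesPerSecond * flightTimeSeconds;
    return {centroids.back().x + shift.x * framesInFlight,
            centroids.back().y + shift.y * framesInFlight};
}
```

Calling `predictNextContact` with the centroids of the contact frames, the camera frame rate (240 in our recording), and an estimated flight time yields a single point. A practical predictor would report an area around that point, since a few frames give only a rough velocity estimate.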

Technology

C++, OpenCV, Unreal Development Kit, Blender, Kinect, Motion Capture, Point Grey high-speed camera